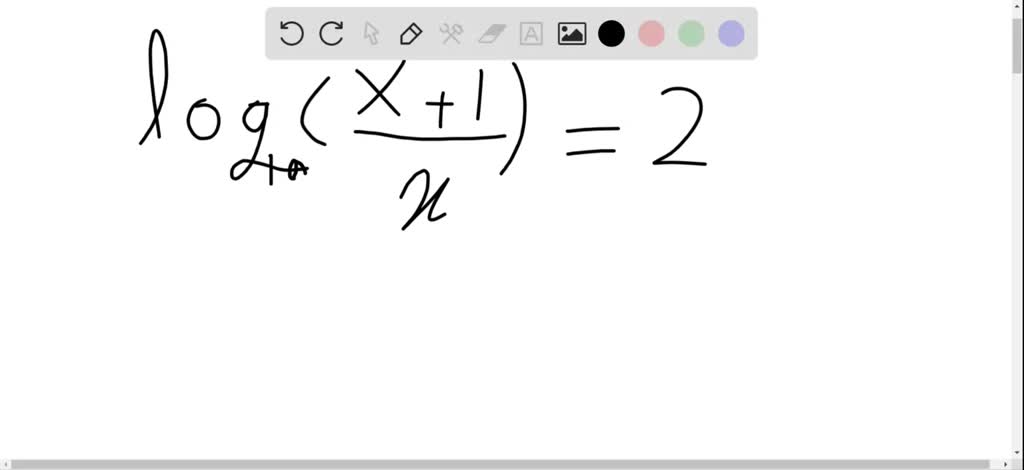

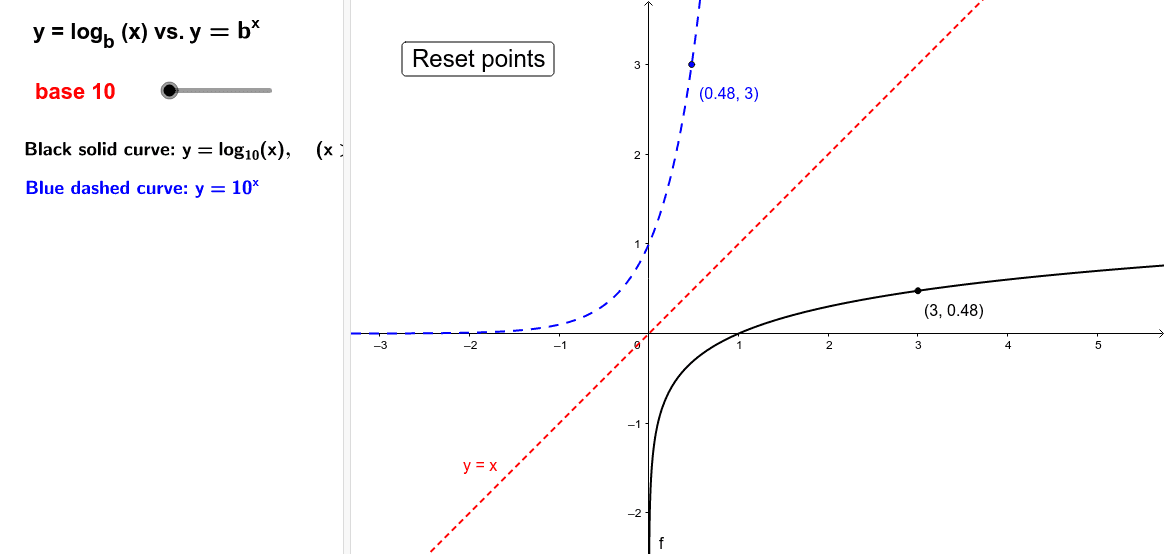

When something grows logarithmically, it is being divided. When you say something grows exponentially, it’s being multiplied. The question logarithms answer is: “What power do we raise 3 to to get 9?” The answer, of course, is 2! log3(9) = 2Ī logarithmic function is the opposite of an exponential function. Logarithms are used to solve for x when x is in the exponent.

If you haven’t thought about algebra since high school, you’ll want a refresher on just what the heck a logarithm is. “A quantity representing the power to which a fixed number (the base) must be raised to produce a given number.“- Lexico But what about that blue logarithmic line? That looks almost as good as constant time! The orange quadratic spikes up super fast while the green constant stays the same no matter the input. Looking at this figure, you can see how each of the four runtimes scale. O(n²) is almost never an acceptable time complexity and you can usually avoid it with a clever algorithm of some kind. If your list has four trillion letters, it may never finish running! If your list has two letters in it, your program will take four operations to run. Since for each item in the list you have to also go through the whole rest of the list, the number of operations your program has to do is the number of inputs (or n) times itself (n squared).

Now, say you want your program to add every member of the list to each other. O(n) is a decent time complexity and you often can’t avoid it. If the program is adding each number to itself three times instead of two, it will still be O(n) because even though your program is doing more operations per input, how much more is constant per input. It could be running on an old laptop or a super computer and take a very different amount of time to run. Your input could be two letters or it could be twelve billion. Does that say anything about how fast it will run in practice? Not really. You could say that its runtime is Order n because the number of operations it has to do is proportional to how many letters are in your list. If your program does something like duplicate all the letters like so: => įor each letter, the letter is duplicated. O(1) is the best possible time complexity! Data structures like hash tables make clever use of algorithms to pull off constant time operations and speed things up dramatically. Its time complexity is simply 1 because it doesn’t matter how many letters are in the list, it will always take just one operation. If your program wants to remove one letter from your list like this: => Since there are five of them, n here would be equal to 5: n=5. Let’s say we have a list of five letters: There are others like n log n and n factorial but we’ll leave those out for now. I’ll go over four important categories of big-O notation. That’s a pretty wordy definition so let’s break it down. “Time complexity is the computational complexity that describes the amount of time it takes to run an algorithm.” - Wikipedia Much like code itself, the parts are simple, but these parts add up to something unfathomably complex. In my experience, all the computer science concepts the average programmer needs to know are not hard to understand on their own. Now, I have nothing against math classes, but you definitely don’t need them to write good code. This concept is one of the reasons higher education thinks it needs to make computer science undergrads take years of math classes.

It defines how much memory a program might use but we’ll leave that for the next article. It’s a super important concept to understand, at least on an intuitive level, in order to write fast code. That’s time complexity analysis or big-O notation! If you’re new to computer science, you’ve probably seen a notation that looks something like O(n) or O(log n).

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed